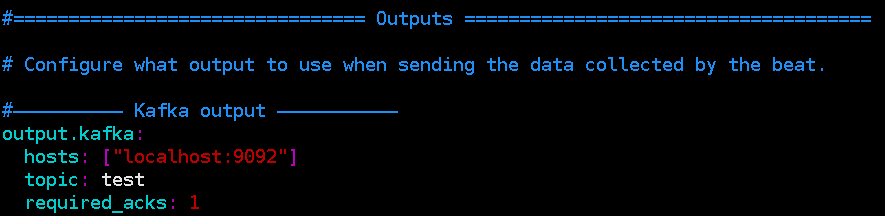

Filebeat monitors log directories or specific log files, tails the files, and forwards them either to Elasticsearch or to Logstash for indexing. Apache Kafka stores the messages received from Filebeat in a queue (topic). Logstash subscribes to a Kafka topic and receives the messages.

The only Kafka ecosystem feature I'm aware of that can help you do something like that is Kstreams (but you have to know how to develop using the Kstreams API), or another piece of Confluent software called KSQL, which allows SQL stream processing on top of Kafka topics and is more oriented to analytics.

The pipeline configuration will include the information about your input (Kafka in our case), any filtering that needs to be done, and the output (Elasticsearch). Now, to install Logstash, we will be adding three components.

This folder contains a script for installing Filebeat. The script has been tested on the following operating systems: Ubuntu 18.04, Ubuntu 20.

Here is my Kafka output to multiple topics configuration. I can't seem to find an example of how to correctly use the "topics topic condition".

Here is the full filebeat.yml configuration file. It sets `registry_file: /var/lib/filebeat/registry` and references the log path `var/log/httpd/elasticbeanstalk-access_log`. Its comments describe the remaining sections. One section configures which outputs to use when sending the data collected by the beat. The logging section can enable debug output for selected components, and it sets the name of the files the logs are written to and the directory the log files will be written to. There are three options for the log output: syslog, file, and stderr. On Windows systems the logs are sent to the file output by default; on all other systems they go to syslog by default. To enable logging to files, the `to_files` option has to be set to true. Beats automatically rotate files if the `rotateeverybytes` limit is reached.
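As a rough illustration of the logging options described above, a Filebeat 5.x logging section might look like the sketch below. The directory, file name, size limit, and selector are assumed values chosen for the example, not settings taken from the original configuration.

```yaml
# Sketch of a filebeat.yml logging section (all values are assumptions).
logging.level: debug            # selectors only take effect at debug level
logging.selectors: ["publish"]  # enable debug output for selected components
logging.to_syslog: false
logging.to_files: true          # must be true for file logging
logging.files:
  path: /var/log/filebeat       # directory the log files are written to
  name: filebeat.log            # name of the log files
  rotateeverybytes: 10485760    # rotate once a file reaches about 10 MB
  keepfiles: 7                  # number of rotated files to keep
```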

What I want to do is send each of the log files to a separate topic based on a condition (see the documentation section on topics). I have tried to do this, but no data is being sent to any of the topics. Does anyone know why my condition does not match, or whether it is correct?
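For reference, one way to route events to different topics is sketched below. It is not the poster's configuration: the broker address, log paths, topic names, and the custom `log_type` field are all hypothetical. Each prospector tags its events with a custom field, and each `topics` rule matches on that field; the top-level `topic` is used when no rule matches.

```yaml
# Sketch only: hosts, paths, topic names, and the log_type field are hypothetical.
filebeat.prospectors:
  - input_type: log
    paths:
      - /var/log/myapp/access.log
    fields:
      log_type: access          # custom field, stored under "fields"
  - input_type: log
    paths:
      - /var/log/myapp/error.log
    fields:
      log_type: error

output.kafka:
  hosts: ["kafka1:9092"]
  topic: "myapp_applog"         # fallback when no rule below matches
  topics:
    - topic: "myapp_access"
      when.equals:
        fields.log_type: "access"
    - topic: "myapp_error"
      when.equals:
        fields.log_type: "error"
```

When a condition never matches, the field path is worth checking first. With `fields_under_root: true`, the custom field sits at the top level of the event, so the condition would need `log_type` rather than `fields.log_type`.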

When processing logs generated by a large number of servers, virtual machines, and containers, the Logstash + Filebeat log collection method can be used. Filebeat is a lightweight log collector launched by Elastic to solve the problem of Logstash being "too heavy".

My Filebeat output configuration to one topic works. It uses `output.kafka:`, whose `hosts` setting lists the initial brokers for reading cluster metadata. I installed Filebeat 5.0 on my app server and have 3 Filebeat prospectors. Each prospector points to a different log path, all of them output to one Kafka topic called myapp_applog, and everything works fine.
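A minimal sketch of the single-topic setup described above could look like this. Only the topic name `myapp_applog` and the prospector count come from the post; the broker address and the three log paths are placeholders.

```yaml
# Sketch only: broker address and log paths are placeholders.
filebeat.prospectors:
  - input_type: log
    paths: ["/var/log/myapp/app.log"]
  - input_type: log
    paths: ["/var/log/myapp/audit.log"]
  - input_type: log
    paths: ["/var/log/myapp/batch.log"]

output.kafka:
  # initial brokers for reading cluster metadata
  hosts: ["kafka1:9092"]
  # every prospector's events go to this one topic
  topic: "myapp_applog"
```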
